Today’s blog post is part of our guest blogger series, and is written by Eugene Lazutkin.

Almost any Java programmer, who starts to study JS Grokking, its OOP facilities, and a dynamic nature of JS, thinks that they can be greatly improved and start their own OOP library/helpers. The majority of these libraries

Almost any Java programmer, who starts to study JS Grokking, its OOP facilities, and a dynamic nature of JS, thinks that they can be greatly improved and start their own OOP library/helpers. The majority of these libraries

are left forgotten when their authors learn more JS, yet some grow to fruition and end up being marketed. This article is dedicated to such people.

The goal of this article is to go over common OOP techniques suitable for JS, their pitfalls, problems, and trade-offs.

Warning: this article is not for beginners. You have to be familiar with OOP at least in one language, and have good understanding of builtin OOP in JS.

Why OOP?

People who hear me talk about programming techniques, or read my articles and slides, know that I think that OOP is frequently overused and abused, and that we are missing good practical alternatives. OOP is a very important tool when used correctly. If you decide to use it, make sure that you don’t cripple it in some way.

Single inheritance OOP: what is available?

First of all, OOP is a set of concepts, and any existing language implements some subset of it. It doesn’t make sense to declare one language “purer” then another, or enshrine one particular implementation as canonical.

The foundation of OOP is an object (not class, not constructor, not any other thing), which encapsulates state, and defines methods to deal with an object. Yes, OOP is a branch of the imperative programming. It provides a neat way to partition/structure your program state into manageable pieces, thus reducing overall complexity. It has nothing to do with pure functional programming or any other stateless techniques.

Other things, which are usually associated with OOP, like classes, constructors, interfaces, and so on, are optional. Some techniques work best when applied statically, yet absolutely unpractical in dynamic languages for performance reasons. Good example would be interfaces. In many cases simple duck-typing will do.

Let’s start with single inheritance and work our way up.

Classes and factories

One of the major principles of programming is eliminating repetitive tasks. We would not go on for very long without loops or if we had to produce each and every object by hand. That’s why we classify objects (in a philosophical sense) and provide simple ways to mass-produce them according to their classes/categories.

Compiled languages usually rely on “classes,” which serve as “recipes” for a language to verify and produce new objects. The other frequently used alternative is to provide special entities called “factories,” which can produce new objects. Factories can be objects, or functions. Essentially this is what JS uses — a function called “constructor.” Calling this function repeatedly we can mass-produce similar objects.

Object invariants

One important rule/convention is to observe proper invariants on an object. In most cases it is a validity requirement for an encapsulated state. For example, we may require that every valid object define a member variable “height” with a valid interval from 0 to 10 including all floating numbers. It cannot be -1, undefined, null, “HaHa!”, or []. In the real world we usually have our states span across several member variables, and validity rules are compound involving several member variables at once, or even depend on external resources.

When objects come to life it is usually invalid. It is a constructor’s job to make it valid given optional parameters. A good rule of thumb is to make sure that all public methods leave an object in the valid state. If for some reasons our object becomes invalid, it means that it is ready to be recycled. One valid way to achieve that is to run a destructor — the only method that may leave our object completely destroyed.

Inheritance

Another major principle of programming is code reuse. While eliminating repetitive code with loops or recursion helps, it is not enough — it doesn’t cover all possible ways of reuse. We want to be able to build huge programs with a relatively small number of different building blocks, otherwise we will be flooded with complexity. OOP claims to have such a mechanism. It is called an inheritance. With this tool we can define common features (a state as well as functionality encapsulated in methods), and specialized those features in child objects/classes by adding new features. No need to repeat that code again — we just reference it. And we can reuse our base, and our children as much as we want leading to a graph of classes/objects.

While statically compiled languages have inheritance mechanisms built-in, JS allows to manipulate prototypes and delegate a functionality to an arbitrary object.

Life-cycle and construction

With inheritance we inherit more than a state and corresponding methods. Specifically we inherit all associated object invariants (e.g., validity of an object). These invariants are layered and in general should not be violated by derived objects/classes. So if we have an object C, derived from B, derived from A, it should be a valid object C, a valid object B, and a valid object A (obviously we can ignore it in some cases, but this is a common convention, which has served us well since the 60s).

More than that, when constructor B starts, our object should already have a valid object A, when constructor B is finished we have a valid object B, and so on. The same goes for destructors (see below) but in an opposite order: when destructor B is done our object should still be a valid object A.

In order to achieve that we have to construct an object properly. In most cases it is done by chaining constructors starting from the base-level of an inheritance chain. In our example, when an object is created, we should run A(), then B(), then C() in that specific order building our object in layers according to a recipe we have.

So we have a problem here: JS has constructors, it has prototypes to implement the single inheritance, yet it doesn’t chain inherited constructors for you. You have to do it yourself. Obviously this practice is error-prone, and this is our first requirement for an OOP tool: chain constructors automatically, it is not rocket science, it is purely mechanical process.

Life-cycle and destructors

There is a lot of confusion about destructors. The main idea is to have a counterpart to a constructor, which will “destroy” an object. Why do we need it? It ensures that all resources allocated to an object are properly disposed of: memory is freed, files are closed, network sockets are released, event sources are unsubscribed for, and so on.

Modern languages are usually garbage collected, so we don’t need to worry about memory per se, but other resources are still there and should be dealt with appropriately. Java has it in a form of finalizers.

One misconception with destructors is that many programmers think that in our GC world only physical resources (files, network, USB devices, and so on) should be released. Unfortunately it is not so.

There are several categories of resources, which are pure memory, yet should be taken care of. Examples:

- Some objects insert themselves in lists/structures kept by other objects. Imagine that you don’t need your object anymore, yet it’s included on a long-lived list — your object will not be garbage-collected until that list dies. If your object did some event processing with events coming from that list, it will continue to do so, consuming resources and potentially breaking your program.

- Some objects employ buffering techniques to accumulate data before passing it to physical objects (files, network sockets), or memory-based entities. Garbage-collecting such object without flushing a buffer may lead to corrupt data.

“But JS doesn’t have a concept of finalizer/destructor, right?” True. With garbage-collected languages the moment of collection is not well defined, so in many cases we cannot rely on a finalizer — we have to do it manually. Imagine that we “lost” a file object relying on the fact that its finalizer closes the file, and it does, but 2 days later. Not what we expected. So in many cases we have to call such a finalizer manually.

Just like constructors, destructors should be chained but in a reverse order — which is the only way to preserve invariants. In our example, it would be C.destroy(), then B.destroy(), then A.destroy() (obviously missing methods should be skipped). Again, JS doesn’t help here (ditto Java with its finalizers), and we have to chain inherited finalizers manually. Obviously this process is error-prone, yet stupidly simple, and can be easily automated. Unlike constructors destructors are relatively rare so an optional requirement would be: chain destructors/finalizers automatically.

Destructors and specialized cleanup methods

What if I already have a method to do a cleanup? For example, in my file object I use the close() method. Is it OK to have both?

I would say that both can be present, but personally I would expect that after close() I can still use the object, e.g., call open() on it, while after calling a destructor that object is as good as dead.

Having a common destructor helps people — there’s no need to remember object-specific verbs. It helps to create a reusable components like various containers, which may destroy its content when required without going into details about contained objects.

Life-cycle and internal events

We can look at constructing/destroying an object as events sent to this object processed by the AOP techniques. One event causes an object to be constructed with “event processors” called this way: A() => B() => C(). Another event causes an object to be destroyed/finalized with “event processors” called this way: C.destroy() => B.destroy() => A.destroy(). The former chain is the “after” chain in the AOP lingo, while the latter chain is the “before” chain.

Are there any other chains? Not in C++ and not in Java. But CLOS allows these chains for other methods as well. Imagine that we have events on any (major) state modification. It turned out that in many cases we can decompose our methods in such a way that we can combine them using either “after” or “before” mechanisms — whatever preserves our invariants. How do we know which one to use? It is simple:

- If each method modifies only its own state using information from parameters and its base object, then “after.”

- If each method modifies its state and the state of its base object (preserving invariants), then “before” seems the correct choice.

While most major languages do not support chaining directly for general methods, personally I like it. If it is already implemented for constructors/destructors (e.g., in C++), it is trivial to extend it to other methods.

Obviously not all method calls can be decomposed like that. What should we do?

Super calls: calling super

A more generic mechanism is the ability to call a base method. Remember above when we were talking about chaining methods manually? This is it — error-prone, yet the only available mechanism to do so.

The idea of this mechanism is that your underlying method (the method that was overridden) is a black box. We know how to call it, and what it returns, but we don’t know how it does that. Imagine that it does almost what we need, but we have to correct it a little. In this case in our new method we tweak parameters/state a little, call our base method, and possibly tweak its result, and the state again before returning. In AOP lingo it is called “around” advice. That base method in turn can use the same technique piggy-backing on its base, and so on, or can be a completely original method.

We can even simulate “after” and “before” chaining by skipping the initial tweaking or the final tweaking respectively.

At this point many programmers ask: “why bother with chaining at all if we have powerful super calls?”. Two reasons:

- This stuff is manual and consequently error-prone.

- Usually chaining is cheaper performance-wise than (recursive) super calls in a dynamic environment. Obviously this claim depends on implementation, which in turn depends on trade-offs.

Another fine point — there are two ways to call a method from a base class: call it directly by name, specifying a name of a base class/object (a-la C++), or call it indirectly, something like “call a method I overrode with this method, but from (one of) the base object.” Is it really important? You bet!

Back to single inheritance

So in a single inheritance paradigm, newly derived objects can add new chunks of state (e.g., new member variables), new methods to work specifically with this new state, and can modify behavior of existing methods. Internally such object can use an API provided by its base object to achieve its objective, yet keep the complexity down.

In order to do that we need facilities/helpers described above: automatic chaining for certain methods (most notably constructors), and super calls.

OOP fail #1

Unfortunately, single inheritance OOP fails spectacularly in the code reuse department. A fish, a duck, a hippo, and a submarine can swim. Does it mean that they have a common swimming ancestor from which they inherited their swimming prowess?

In the real world the answer is “no.” But in the world of single inheritance the answer can be “yes,” and as a result we have some top-heavy hierarchy, where top classes have all possible features, and usually those features are inactive, so they can be activated/implemented when needed.

What if the answer is “no”? In this case we have to implement similar (or the same functionality) in different classes/objects by repeating it or by proxying it manually.

So what are we to do? There are two major ways about it: cross-cutting concerns of AOP, and multiple inheritance. Let me be crystal clear: the latter means “inheritance of implementation”, not just “inheritance of interface” or anything else — ultimately we want to improve our code reuse.

OOP fail #2

Everybody knows a joke about “Hello, world!” in Java, e.g., take a look here — a programmer is forced to create a class with a static method, which will be used to run a program. Obviously that class is completely bogus and never used to create instances. Everybody smiles, but smart people say that “Java is not for simple programs” assuming that it kicks ass on big programs. Let me translate it for you: Java, or more specifically OOP, doesn’t scale down. You are better off with imperative or functional techniques when writing small programs or small components.

What does it mean for JavaScript programmers? It means that even on mobile browsers, the size of the OOP packages we use is not that important when compared with size of functional or imperative helpers.

When we do need OOP, our program is big enough already, and what we need is a robust fast implementation of OOP features we use. The same goes for AOP, which is an OOP companion, but it is even less size sensitive, because it becomes a player in even bigger programs.

Multiple inheritance

Multiple inheritance is a feature when an object can inherit its properties and behaviors from more than one path. Effectively an inheritance diagram ceases to be a tree and becomes a DAG.

With this paradigm a composite object will contain chunks of state, but they are not layered anymore. So how does it change a life-cycle? It doesn’t really. All techniques we discussed above are still valid. Everything we mentioned about invariants still applies.

For example, what does it mean to inherit from two other objects? Does it mean that we will call both constructors in parallel? No. Our code is still single-threaded and constructors are called one after another. It implies that we sorted them out in some fashion and use single inheritance mechanisms to call them.

This linearization can be done indirectly by walking an inheritance DAG, or directly, by flattening the DAG once and converting it to a list. In any case when we have a list, we can apply all single inheritance techniques.

DAG linearization

When we can linearize a DAG, we can call constructors in a proper order, we can:

- call destructors/finalizers in a proper order

- weave “before” and “after” chains

- determine what object is “super” of any particular instance

- exclude duplicate bases, which are common for multiple inheritance.

Obviously we can walk a DAG every time we need to do something mentioned in a previous paragraph, but it may become a performance bottleneck. In any case if we want to linearize it statically or dynamically we should come up with an algorithm first.

Such algorithm exists and it is called C3 MRO, which was implemented in Dylan, Python (since 2.3), Perl 6, and Parrot. I know some people think of it as an “ambiguous magical conflict resolution.” If that is what you think, run, don’t walk, and read on Toposort, which was discussed in a CS class right before Bubble Sort. It is short and sweet, with linear complexity. Yes! Big O(N + E), where N is a number of nodes (inherited classes/objects) and E is a number of inheritance links/edges between them, usually ~N in our case. I saw some homegrown implementations of sorting/walking in the wild with a quadratic complexity O(N^2) — e.g., dojo.declare() had a walker like that in Dojo 1.0.

By the way, is it possible to use different ordering for constructors and for method calls? Theoretically it is possible but nobody does it in practice — it is very confusing.

Back to single inheritance?

Does it mean that we are back to single inheritance then? Yes. JavaScript doesn’t provide any mechanisms for multiple inheritance so this is the best we can do implementation-wise.

But what techniques are specific to multiple inheritance? There is the old time-proven OOP technique called mixins, and there is a new (~10-15 y.o.) technique called traits. The biggest difference between them is how to integrate a new piece of functionality/state in an object.

Strictly speaking some authors separate multiple inheritance and other methods we are going to talk about, and some consider them extensions of MI. It is unimportant for our discussion. Let’s take a quick look at them.

Mixins

Mixins are classes or objects that usually cannot stand on their own because they use facilities of their host. They are used mostly to add new functionality to existing classes/objects with a minimal code. Usually they are specially designed as a part of a hierarchy, so they know what APIs they can use, and what APIs can be changed and how.

When mixins are combined they intentionally reuse life-cycle facilities of host objects. Name clashes are intentional, and resolved by chaining, or super calls.

Toy example

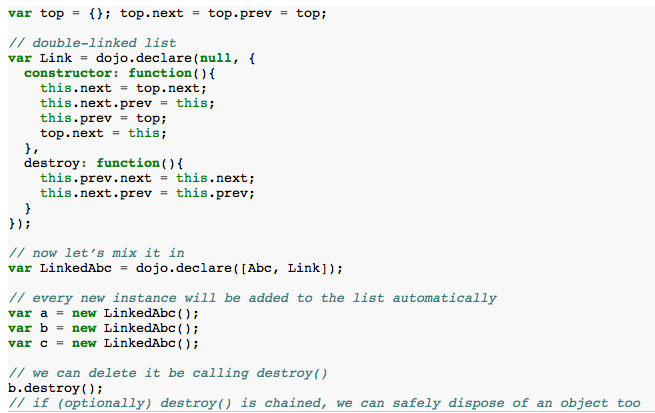

Imaging that one of a frequent operations I have is to keep objects of various kinds in a list, so later I can use this information for debugging (to print all objects), or for serialization, or for advanced control of some top widget, or for any other needs. I can create a mixin for that (using dojo.declare):

As you can see that mixin is very easy to use, it relies solely on life-cycle methods (constructor and destroy) and presents no API on its own. Obviously we can add convenience methods, if the need arises.

Complex situations

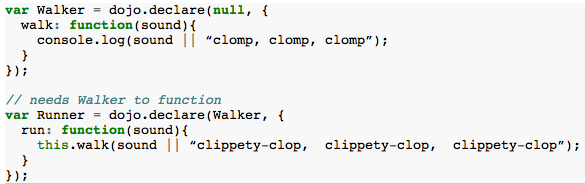

What if I need one mixin to work together and in presence of the other mixin? Is it possible to combine mixins? Yes. The resulting object is a mixin too. Simple example:

You can see that this simple example defines to two constraints: Walker is required by Runner, and Walker should be mixed in before Runner — order can be important for preserving invariants.

Now we can mix in Runner, or Walker as we deem appropriate. As you can see these particular mixins do not have their own state and do not participate in a life-cycle at all. What if we want to add a Runner to an object that already has Walker in it? This situation is normal. We should remove a duplicated base and make sure that Walker is defined before Runner in order to obey all object invariants.

Environment anonymity

Because mixins do not know exactly what are other objects/mixins/classes in a hierarchy of any given host object, they cannot assume any class names and should rely only on anonymous calls. Chaining is an anonymous mechanism because it doesn’t require a mixin to call other methods directly. Super calls should be done in an anonymous manner as well. Access to common resources provided by its host object should be done strictly using a handle (“this”) of this object.

Mixin problems

While mixins or some related concepts are used by programmers for a long time, we learned about their problems too. One problem is that sometimes a mixin overrides an unrelated method or a data member with the same name. For example, method run() above overrides another method run() with a different semantics — what should we do? Obviously such a situation is an error, and should be dealt somehow, e.g., by renaming the offending method statically or dynamically.

In general, such naming problems force to design mixins together with the hierarchy. It works in practice but can make a maintenance of such code difficult, especially when fine details of the initial design are lost/forgotten.

OTOH, in my experience, I’ve met with method override bugs a handful of times. Only one of them was difficult to find/fix. So personally I don’t think that this is a big practical problem.

Traits

Traits are trying to fix mixins.

State clash

What if mixin defines a member variable that is already defined possibly with a different semantics? Easy: remove state. All traits should be completely stateless, and should not access any member variable directly.

Initialization order

What initialization? Because our traits have no state, we don’t need to initialize them at all.

Public and required lists

In general a trait can define a mandatory list of public methods (methods for external use), and an optional list of so-called required methods (methods required by the trait to function properly). The first list is mandatory because without a state this is the only way a trait can do something useful. The second list is technically optional, if a trait returns a constant, or encapsulates a stateless algorithm, in order to be productive, it has to define its required resources (methods).

Required methods are invisible to an end user. Some implementations make them public along with required names, while in accordance with founding papers they are parameters of a trait, not something available indirectly. Being non-public required methods cannot clash even if they are named identically — it is just a parameter name.

Method clash

What do you do if different traits define the same name? Is there a rule to combine/override them? No. All clashing names are simply removed from the final API.

Composition of traits

We already saw that we can compose mixins. Can we compose traits? Yes. The result is new trait with duplicate public methods removed, and required methods (partially) satisfied.

Object composition

So how can we have objects without a state? The classics (see the original article) tell us: Class = State + Traits + Glue. What does it mean? It means that you:

- Define a class/object with all required member variables.

- Because member variables should not be accessed directly, define getters/setters for them.

- Add all required traits.

- Specify what actual public method corresponds to any given required method. Think of it as a switchboard programming — connecting endpoints.

- For all missing required methods provide them in a glue code.

- If some public methods clashed and were excluded, but you still want them, provide them in a glue code.

- If some trait methods should be modified, override them from a glue code.

Precedence rules

Yes, there are precedence rules. A class/object level method (a glue code) overrides a trait method. A trait method overrides an inherited method.

Inheritance

From the previous rules you probably deduced that inheritance is supported. Yes, single inheritance can be used to form a state, and a glue code.

Trait problems

Traits are more complex than mixins. Their benefits, if any, will show up only for programs with high complexity. To me it goes almost like that: for low complexity problems try to use functional and imperative tools. If it doesn’t work anymore, try OOP with mixins. If it doesn’t work, try to add AOP. If it fails, try traits. With every new tool you will spend more time programming, yet the overall complexity of the project should be reduced — in fact this is the requirement when choosing tools and methodologies.

Traits were around for more than 10 years, still there is no major widely used language to support them natively. It doesn’t mean that traits are no good, but it does mean that we have less accumulated experience with traits than with other programming techniques.

“No state” and “no clash” restrictions require a programmer to be more involved (that’s the goal), and put a significant emphasize on a “glue code,” even more so than for mixins. Ideally that “glue code” will consist of name adapters and argument adapters, meaning that we will have more technical code to browse through, muddying the whole picture for a programmer. Do not forget that in JavaScript functional adapters do not come for free unlike Java or other statically optimized languages — this performance problem may change in the future with better compilers when the current crop of IE, FF, Chrome, Safari, and Opera are replaced completely. Say, in 5-10 years judging by our past performance in this area (I hope I am wrong).

In general traits target statically analyzed/compiled languages — otherwise we cannot enforce all trait constraints and rules. Consequently all current JavaScript implementations cut corners, add some homespun improvements, and generally migrate toward mixins with some exotic rules, crippling the idea of traits in the process. A great deal of a programmer’s cooperation is required, making the whole premise of traits moot.

OOP fail #3

It appears that OOP with its inheritance diagrams doesn’t scale up as well. There is nothing wrong with OOP per se. It is us people, who suck at building big hierarchies. Plato tried to classify the world around him, then his student Aristotle (who taught Alexander the Great in his turn) improved it … a little … in over 170 works, and that was deemed unsatisfactory by later philosophers. Don’t forget Carl Linneaus, who started the modern taxonomy of organisms, which is still updated on regular basis, and nobody expects this process to be finished any time soon.

Yes, these examples have scopes larger than most modern software projects, yet it explains the structural failure I saw on several occasions, where all intentions were good, everybody was on board, all available great minds were allocated to a warehousing project, and yet the results were dismal. What went wrong? The nature of the beast cannot be changed.

Do you really think that you can do better than those famous guys?

If this topic interests you, I suggest to start with these two papers: “An Aristotelian Understanding of Object-Oriented Programming” by Derek Rayside and Gerald T. Campbell, and “Classes vs. Prototypes – Some Philosophical and Historical Observations” by Antero Taivalsaari.

Summary

OOP is an important tool for writing libraries and large projects. In my opinion the practical sweet spot for OOP in JS is mixins. It means that we have to have ironclad tools to support this technique, and spend some time in designing our libraries and applications in a way that will take full advantage of mixins.

Because OOP works the best on non-trivial applications, trading off bytes for features, or cutting corners doesn’t look wise.

What are your thoughts on this topic? Share them in the comments section below!

Looking for more information like this? Check out other blog posts on this topic by clicking on the button below:

Eugene has worked with Base36 since January 2011. If you like what you read, you can check out more of his articles on his blog.

Thanks to Dmitry Baranovskiy for the use of his photo.

Thanks to Dmitry Baranovskiy for the use of his photo.